What Is Website Caching and Why Does It Matter?

Caching is one of the most impactful performance optimizations available — and one of the most misunderstood. This guide explains how website caching works, the different types, and how to use them to make your site dramatically faster.

The Secret Behind Fast Websites

Here's a question worth asking: when two websites look identical — same design, same content, same hosting provider — why does one load in under a second while the other takes three? The code might be similar. The servers might be comparable. The images might even be the same size.

Often, the difference comes down to caching. The fast site is serving pre-built responses stored in memory. The slow site is rebuilding every response from scratch on every single request. The slow one works hard for every visitor. The fast one works smart.

Caching is one of the highest-leverage performance techniques available for any website. It's also one of the more commonly misunderstood — most people have heard the advice "clear your cache" when something looks wrong, but far fewer understand what they're actually clearing, why it was there in the first place, and how it affects their site's performance from the server side.

This guide explains caching from the ground up: what it is, the different types that affect your website, how each works, and what you should actually be doing with it to make your site faster.

What Caching Is

Caching is the practice of storing a copy of data in a faster, more accessible location so that future requests for that data can be served from the copy rather than recomputed or re-fetched from the original source.

The principle is simple: if you know you'll be asked for the same information repeatedly, computing it once and saving the answer is faster than computing it every time. The saved answer is the "cache," and the process of saving it is "caching." When a request finds the answer already saved, that's a "cache hit"; when it discovers the answer isn't saved yet and has to compute or fetch it, that's a "cache miss."
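
To make the vocabulary concrete, here is a minimal Python sketch of a cache that counts its hits and misses. The class name `CountingCache` is hypothetical, and a plain dictionary stands in for whatever storage a real cache would use.

```python
from typing import Callable, Dict, Hashable


class CountingCache:
    """A minimal in-memory cache that counts hits and misses."""

    def __init__(self, compute: Callable[[Hashable], object]) -> None:
        self._compute = compute  # the slow "original source"
        self._store: Dict[Hashable, object] = {}
        self.hits = 0
        self.misses = 0

    def get(self, key: Hashable) -> object:
        if key in self._store:
            self.hits += 1  # cache hit: the answer was already saved
            return self._store[key]
        self.misses += 1  # cache miss: compute once, then save the answer
        value = self._compute(key)
        self._store[key] = value
        return value


# Stand-in for expensive work: summing the first n integers.
cache = CountingCache(lambda n: sum(range(n)))
cache.get(1_000_000)  # miss: computed from scratch
cache.get(1_000_000)  # hit: served from the saved copy
print(cache.hits, cache.misses)  # 1 1
```

The trade-off mentioned below shows up even here: if the underlying data changed, this cache would keep returning the old answer until something removed it.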

Caching appears throughout computing at every level: your CPU has a cache, your operating system has a file cache, your browser has a cache, web servers and databases have caches, and CDNs are essentially sophisticated caches. Each level trades some freshness (the cached answer might be slightly outdated) for significantly improved speed.

For websites, caching works at several distinct layers, each with different mechanics, different control mechanisms, and different performance implications.

Browser Caching: The Cache Your Visitors Carry With Them

When your browser loads a web page, it downloads the resources that make up that page: HTML files, CSS stylesheets, JavaScript files, images, fonts, videos. These files are stored in your browser's local cache, an area of your device's storage (plus some memory) set aside for temporarily keeping web resources.

The next time you visit the same page, your browser checks its cache before making network requests. If the resource is cached and hasn't expired, the browser serves it from local storage instead of downloading it again. A cache hit means no network request, no server load, and delivery that's essentially instant — because the file is already on your device.

This is why revisiting a website is almost always faster than the first visit. First visit: everything downloads from the server. Subsequent visits: most resources load from your local cache, with only changed content downloaded fresh.

How Browser Caching Is Controlled: Cache Headers

Web servers control browser caching behavior through HTTP response headers sent with each resource. The key headers:

Cache-Control: The primary caching directive. Values include:

max-age=31536000: Cache this resource for one year (31,536,000 seconds). Appropriate for immutable assets like versioned JS and CSS files.

max-age=3600: Cache for one hour. Appropriate for resources that change occasionally.

no-cache: Don't use the cached version without validating with the server first. (Confusingly, this doesn't mean "don't cache"; it means "always revalidate.")

no-store: Don't cache this at all. Appropriate for sensitive data like banking information.

public: This response can be cached by browsers and intermediate caches (CDNs).

private: Only the end user's browser should cache this, not shared caches. Appropriate for personalized content.
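
These directives are comma-separated and can be combined. As a rough sketch (hypothetical helper names, and simplified parsing that ignores quoted arguments containing commas), here is how a cache might read them to decide whether it may keep a copy at all:

```python
from typing import Dict, Optional


def parse_cache_control(header: str) -> Dict[str, Optional[str]]:
    """Split a Cache-Control value into {directive: argument or None}.

    Simplified: quoted arguments that contain commas are not handled.
    """
    directives: Dict[str, Optional[str]] = {}
    for part in header.split(","):
        name, _, value = part.strip().partition("=")
        if name:
            directives[name.strip().lower()] = value.strip().strip('"') or None
    return directives


def may_store(header: str, shared_cache: bool) -> bool:
    """Whether a browser (shared_cache=False) or a CDN (True) may keep a copy."""
    directives = parse_cache_control(header)
    if "no-store" in directives:
        return False  # nobody may keep a copy
    if shared_cache and "private" in directives:
        return False  # only the end user's browser may keep it
    return True


def must_revalidate_first(header: str) -> bool:
    """no-cache: a copy may be stored, but must be revalidated before each use."""
    return "no-cache" in parse_cache_control(header)


print(may_store("public, max-age=31536000", shared_cache=True))  # True
print(may_store("private, max-age=3600", shared_cache=True))  # False
print(must_revalidate_first("no-cache"))  # True
```

Note how `no-cache` and `no-store` land in different functions: the first still allows storing, the second forbids it.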

ETag: An identifier (often a hash of the content) for the current version of a resource. When a browser has a cached resource and needs to revalidate it, it sends the ETag back in an If-None-Match header. If the resource hasn't changed, the server responds with 304 Not Modified: no content is downloaded, just a small header response confirming the cached copy is still valid. If it has changed, the server sends the new resource with a new ETag.
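
The revalidation exchange can be sketched in a few lines of Python. This is a simplified, framework-free illustration: `respond` is a hypothetical handler, the ETag is a truncated SHA-256 of the body (any opaque token would work), and the comparison ignores a `W/` weak-validator prefix, since If-None-Match uses weak comparison.

```python
import hashlib
from typing import Dict, Optional, Tuple


def make_etag(body: bytes) -> str:
    """Derive a quoted ETag from the content, so it changes whenever the bytes do."""
    return '"' + hashlib.sha256(body).hexdigest()[:16] + '"'


def respond(body: bytes, if_none_match: Optional[str]) -> Tuple[int, Dict[str, str], bytes]:
    """Return (status, headers, body) for a GET request, honoring If-None-Match."""
    etag = make_etag(body)
    headers = {"ETag": etag, "Cache-Control": "no-cache"}  # store, but always revalidate
    if if_none_match is not None:
        # Weak comparison: a W/ prefix on the client's tag is ignored.
        sent = [tag.strip().removeprefix("W/") for tag in if_none_match.split(",")]
        if "*" in sent or etag in sent:
            return 304, headers, b""  # cached copy is still valid: no body sent
    return 200, headers, body  # first visit, or the content changed


css = b"body { color: #222; }"
status, headers, _ = respond(css, None)  # first visit: 200 with the full body
status2, _, body2 = respond(css, headers["ETag"])  # revalidation: 304, empty body
print(status, status2, len(body2))  # 200 304 0
```

`str.removeprefix` needs Python 3.9 or later.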

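Last-Modified: The timestamp of when the resource last changed; when revalidating, the browser sends it back in an If-Modified-Since header. It is less precise than an ETag because HTTP timestamps only have one-second resolution, so two changes within the same second look identical. ETags are generally preferred because they track the content itself.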

The practical strategy: set long cache lifetimes (one year is common) for static assets that rarely change — images, fonts, vendor JS/CSS — and implement a cache-busting strategy (adding version strings or hashes to filenames) so that when files do change, browsers treat them as new resources rather than using the stale cached version.
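
One common way to implement cache busting is to embed a short hash of the file's contents in its name at build time. The sketch below assumes a hypothetical `hashed_name` build step; in practice, bundlers and asset pipelines usually do this for you.

```python
import hashlib
from pathlib import PurePosixPath


def hashed_name(filename: str, content: bytes, length: int = 8) -> str:
    """Turn static/app.css into static/app.<hash>.css, where <hash> depends on the bytes."""
    path = PurePosixPath(filename)
    digest = hashlib.sha256(content).hexdigest()[:length]
    return str(path.with_name(f"{path.stem}.{digest}{path.suffix}"))


# A hashed URL always points at the same bytes, so it can be cached for a year.
LONG_LIVED = "public, max-age=31536000"

old = hashed_name("static/app.css", b"body { color: black; }")
new = hashed_name("static/app.css", b"body { color: navy; }")
print(old != new)  # True: edited CSS gets a new URL, so browsers fetch it fresh
```

The HTML that references the file has to be regenerated with the new name, which is why this step usually lives in the build pipeline.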

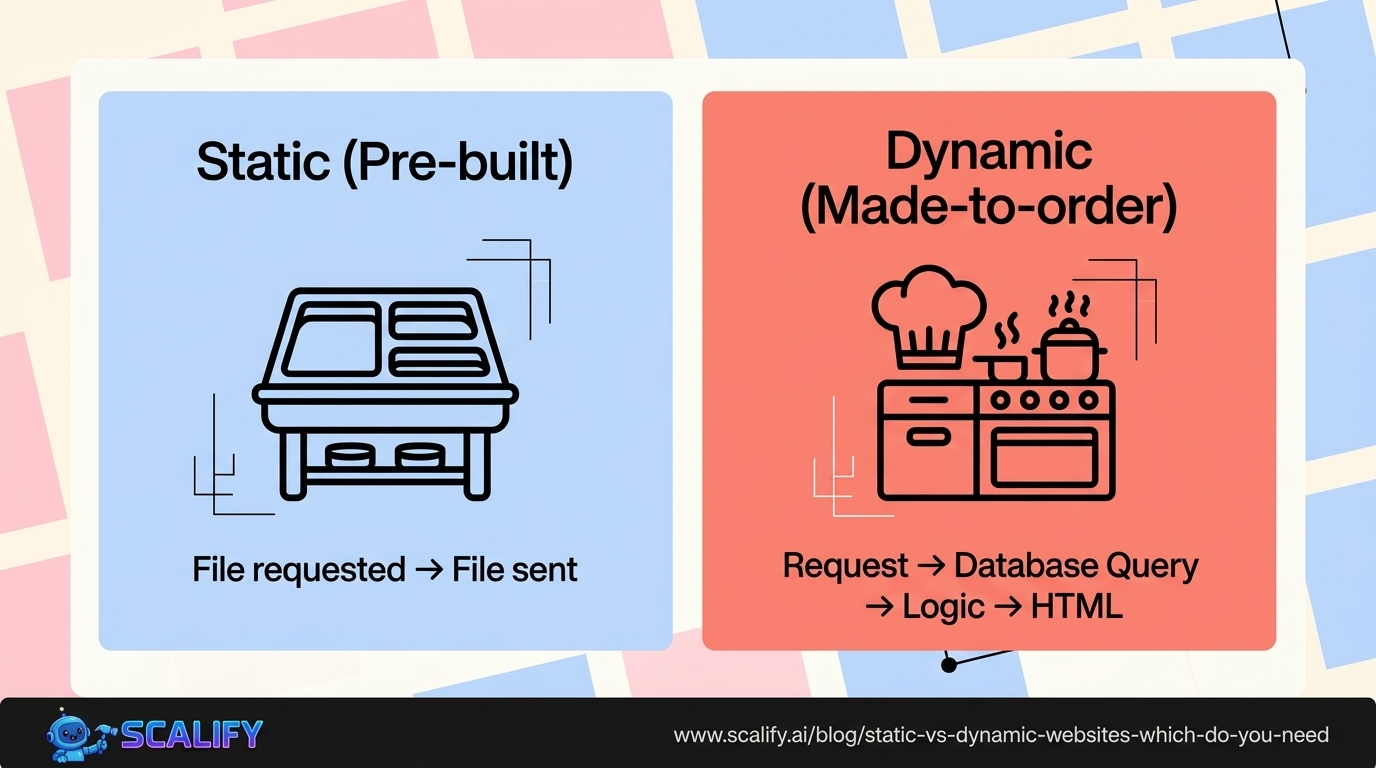

Server-Side Caching: Pre-Building Responses

Dynamic websites generate pages on demand — querying a database, applying templates, running application logic, and assembling HTML for each request. This process is powerful but computationally expensive. A popular page that receives 1,000 requests per hour is re-generating the same HTML 1,000 times, running the same database queries 1,000 times, doing the same work repeatedly even though the output is identical for every visitor.

Server-side caching solves this by storing the generated HTML (or other output) and reusing it for subsequent requests without regenerating it. Different types of server-side caching operate at different levels of the stack:

Full Page Cache (Page Cache)

Stores the complete HTML output of a rendered page. The first request generates the page normally; the output is saved. Subsequent requests within the cache lifetime are served the pre-generated HTML without touching the database or application logic at all.
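
A minimal sketch of the idea, assuming a hypothetical `render` function that does the expensive database and template work. Real page caches usually write to disk or shared memory and handle many more edge cases.

```python
import time
from typing import Callable, Dict, Tuple


class PageCache:
    """Keeps the rendered HTML for each URL for `ttl` seconds."""

    def __init__(self, render: Callable[[str], str], ttl: float,
                 clock: Callable[[], float] = time.monotonic) -> None:
        self._render = render  # the expensive database + template work
        self._ttl = ttl
        self._clock = clock
        self._pages: Dict[str, Tuple[float, str]] = {}  # url -> (stored_at, html)

    def get(self, url: str) -> str:
        now = self._clock()
        cached = self._pages.get(url)
        if cached is not None and now - cached[0] < self._ttl:
            return cached[1]  # hit: no database queries, no templating
        html = self._render(url)  # miss or expired: build the page once
        self._pages[url] = (now, html)
        return html


renders = []


def render(url: str) -> str:
    renders.append(url)  # record each time the expensive path runs
    return f"<h1>{url}</h1>"


pages = PageCache(render, ttl=300)
for _ in range(1000):
    pages.get("/popular-post/")
print(len(renders))  # 1
```

A thousand requests within the five-minute lifetime trigger a single render; the other 999 are served the saved HTML.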

For WordPress, plugins like WP Rocket, W3 Total Cache, and LiteSpeed Cache implement page caching. On a typical WordPress site, enabling page caching can cut Time to First Byte (TTFB) from roughly 500–1500 ms to 50–200 ms for cached pages, often a 5–10x improvement.

The complexity: full page caches must be invalidated intelligently. If you update a blog post, the cached copy of that post's page needs to expire so the next visitor sees the updated content; most caching plugins do this automatically when content is saved. Pages for logged-in users, visitors with items in their cart, and pages with dynamic personalization usually can't be served from the page cache, so the cache must be bypassed for these requests.
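
Both rules, bypassing the cache for visitors who may see personal content and purging a page when it's edited, can be sketched as below. The cookie names are an assumption based on WordPress (cookies whose names start with `wordpress_logged_in_`) and WooCommerce (`woocommerce_items_in_cart`) defaults; other stacks use different signals, and the function names are hypothetical.

```python
from typing import Dict, Iterable

# Assumption: default WordPress / WooCommerce cookie names; adjust for your stack.
BYPASS_COOKIE_PREFIXES = ("wordpress_logged_in_", "woocommerce_items_in_cart")


def should_bypass_cache(cookie_names: Iterable[str]) -> bool:
    """True when the visitor may see personal content, so the page cache is skipped."""
    return any(name.startswith(BYPASS_COOKIE_PREFIXES) for name in cookie_names)


def purge_on_save(page_cache: Dict[str, str], post_url: str) -> None:
    """Event-based invalidation: drop the stale copy when a post is saved."""
    page_cache.pop(post_url, None)


print(should_bypass_cache(["wordpress_logged_in_3f9a"]))  # True
print(should_bypass_cache(["_ga", "theme"]))  # False

cached_pages = {"/hello-world/": "<old html>", "/about/": "<about html>"}
purge_on_save(cached_pages, "/hello-world/")
print(sorted(cached_pages))  # ['/about/']
```

A fuller version would usually also purge pages that list the post, such as the home page and category archives.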

Object Cache

Rather than caching entire pages, object caching stores the results of expensive database queries or computation in memory. When the same query or computation is needed again, the cached result is used instead of hitting the database.

Object caching is typically implemented with in-memory stores like Redis or Memcached. These are extremely fast — sub-millisecond response times for cached data, vs. tens or hundreds of milliseconds for database queries. For complex applications with heavy database usage, object caching can reduce database load by 60–90%, dramatically improving response times and server capacity.
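
The usual pattern is cache-aside: check the cache, fall back to the database on a miss, then store the result with an expiry. The sketch below uses an in-process dictionary as a stand-in for Redis or Memcached so it runs on its own; with the redis-py client, the same steps would map onto `get` and `set(key, value, ex=ttl)` plus some serialization. `cached_query` and `recent_posts` are hypothetical.

```python
import time
from typing import Any, Callable, Dict, Optional, Tuple


class ObjectCache:
    """In-process stand-in for Redis/Memcached: key -> (expires_at, value)."""

    def __init__(self, clock: Callable[[], float] = time.monotonic) -> None:
        self._data: Dict[str, Tuple[float, Any]] = {}
        self._clock = clock

    def get(self, key: str) -> Optional[Any]:
        entry = self._data.get(key)
        if entry is None or entry[0] <= self._clock():
            return None  # missing or expired
        return entry[1]

    def set(self, key: str, value: Any, ttl: float) -> None:
        self._data[key] = (self._clock() + ttl, value)


def cached_query(cache: ObjectCache, key: str,
                 run_query: Callable[[], Any], ttl: float = 60) -> Any:
    """Cache-aside: serve from memory if present, otherwise query and store."""
    value = cache.get(key)
    if value is None:  # note: a query that returns None is never cached here
        value = run_query()  # the expensive database round trip
        cache.set(key, value, ttl)
    return value


queries = []


def recent_posts() -> list:
    queries.append(1)  # count each real database hit
    return ["Post A", "Post B"]


store = ObjectCache()
for _ in range(100):
    cached_query(store, "recent_posts", recent_posts)
print(len(queries))  # 1
```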

Database Query Cache

Some databases have offered built-in query caching: storing the results of queries and returning them when the same query runs again before the underlying data changes. MySQL had a query cache, but it was removed in version 8.0 after it was found to cause more problems than it solved under write-heavy workloads. PostgreSQL has no query result cache; it caches data pages in its shared buffers and relies on the operating system's file cache. Application-level object caching (with Redis, for example) has largely replaced database-level query caching as the standard approach.

Opcode Cache

PHP (the language WordPress is built on) and other interpreted languages compile source code to bytecode before executing it. Opcode caching stores the compiled bytecode so each request doesn't need to recompile the source from scratch. PHP's OPcache has been bundled with PHP since version 5.5; enabling it is usually a one-line change in PHP configuration and typically cuts PHP execution time by 30–50%.

CDN Caching: Geographic Distribution of Cached Assets

A CDN (Content Delivery Network) is a geographically distributed network of servers that caches your website's assets at edge locations around the world. When a visitor in Tokyo loads your site hosted in Virginia, the CDN serves cached assets from the nearest Tokyo edge node rather than fetching them from Virginia — reducing latency from potentially 200+ milliseconds to under 10 milliseconds for cached content.

CDN caching typically covers static assets (images, CSS, JavaScript, fonts) and increasingly supports full page caching through edge workers. The CDN's cache is controlled through the same Cache-Control headers described above — the CDN respects these headers to determine how long to cache each resource before fetching a fresh copy from your origin server.
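
As a simplified illustration of how a shared cache might turn those headers into a lifetime, here is a hypothetical `edge_ttl` helper. It also reads `s-maxage`, a standard Cache-Control directive not listed above that applies only to shared caches such as CDNs and overrides `max-age` there. Real CDNs layer their own defaults and configurable rules on top of this.

```python
from typing import Dict, Optional


def edge_ttl(cache_control: str) -> Optional[int]:
    """Seconds a CDN may serve a stored copy, or None if it must not store it at all."""
    directives: Dict[str, str] = {}
    for part in cache_control.split(","):
        name, _, value = part.strip().partition("=")
        if name:
            directives[name.lower()] = value.strip('"')
    if "no-store" in directives or "private" in directives:
        return None  # shared caches must not keep this response
    if "no-cache" in directives:
        return 0  # may be stored, but must be revalidated before every use
    for key in ("s-maxage", "max-age"):  # s-maxage wins for shared caches
        if directives.get(key, "").isdigit():
            return int(directives[key])
    return 0  # no explicit lifetime: treated as "revalidate" in this sketch


print(edge_ttl("public, max-age=3600"))  # 3600
print(edge_ttl("public, max-age=60, s-maxage=600"))  # 600
print(edge_ttl("private, max-age=3600"))  # None
```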

Cloudflare, the most widely used CDN, caches common static file types by default, but not HTML. More advanced Cloudflare configurations (Cache Rules, Workers) enable full page caching at the edge, so even dynamically generated pages can be served from Cloudflare's edge nodes without reaching your origin server for the cache lifetime.

Application-Level Caching: Specific to Your Framework

Most web frameworks provide caching abstractions:

WordPress: The Transients API allows plugins and themes to store arbitrary data with an expiration. Used extensively by plugins to cache API responses, calculated values, and other data that's expensive to compute repeatedly.

Next.js: Has sophisticated built-in caching for server-rendered and statically generated pages, including Incremental Static Regeneration (ISR) — pages that are statically generated but can be regenerated in the background at specified intervals without a full rebuild.
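
The regenerate-in-the-background idea can be sketched in plain Python. This is not Next.js code (in Next.js you set a `revalidate` interval and the framework does the work); the `RevalidatingPage` class is hypothetical and only shows the behavior: visitors always get the stored page immediately, and a stale page triggers one background rebuild that later visitors benefit from.

```python
import threading
import time
from typing import Callable


class RevalidatingPage:
    """ISR-style sketch: serve the stored page at once; when it is older than
    `revalidate` seconds, rebuild it in a background thread for later visitors."""

    def __init__(self, build: Callable[[], str], revalidate: float,
                 clock: Callable[[], float] = time.monotonic) -> None:
        self._build = build
        self._revalidate = revalidate
        self._clock = clock
        self._lock = threading.Lock()
        self._html = build()  # generated once up front, like a static build
        self._built_at = clock()
        self._rebuilding = False

    def _rebuild(self) -> None:
        try:
            html = self._build()  # the slow part runs off the request path
            with self._lock:
                self._html, self._built_at = html, self._clock()
        finally:
            with self._lock:
                self._rebuilding = False

    def get(self) -> str:
        with self._lock:
            html = self._html
            stale = self._clock() - self._built_at >= self._revalidate
            start = stale and not self._rebuilding  # at most one rebuild at a time
            if start:
                self._rebuilding = True
        if start:
            threading.Thread(target=self._rebuild, daemon=True).start()
        return html  # never waits for a rebuild


versions = iter(["<p>v1</p>", "<p>v2</p>"])
page = RevalidatingPage(lambda: next(versions), revalidate=60)
print(page.get())  # <p>v1</p>
```

The first visitor after the interval still sees the old page; that is the price of never making a request wait for regeneration.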

Laravel, Django, Ruby on Rails: All provide caching abstractions that support various backend stores (Redis, Memcached, file system) for storing computed data, session data, and rendered fragments.

Cache Invalidation: The Hard Problem

There's a famous computer science adage, usually attributed to Phil Karlton: "There are only two hard things in computer science: cache invalidation and naming things." It lands because cache invalidation, knowing when a cached piece of data is stale and needs to be refreshed, is genuinely difficult.

The challenge: you want caches to be as long-lived as possible (for maximum performance) but as fresh as possible (so visitors see current information). These goals are in direct tension.

Different types of content have different optimal cache strategies:

Immutable assets (versioned JS/CSS files, hashed image filenames): Cache for a year. When the file changes, the filename changes (a new hash is generated), so the URL changes, and the browser treats it as a completely new resource. Perfect cache efficiency with guaranteed freshness.

Content pages (blog posts, product pages): Cache for hours or days, with invalidation triggered by content updates. Most caching systems support "event-based" invalidation — when a post is saved, the cached version of that post is automatically purged.

Real-time data (stock prices, live scores, inventory levels): Short or zero cache lifetime. Some data simply can't be cached meaningfully because the value of freshness exceeds the value of caching speed.

Personalized content (user-specific pages, logged-in dashboards): Generally not cacheable in shared caches. Served fresh from the server (or cached privately in the browser) to prevent one user's data appearing in another's cache.
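
One way to encode these four strategies is a small lookup from content type to a Cache-Control header. The category names and lifetimes below are illustrative assumptions, not recommendations for any particular site.

```python
from enum import Enum


class ContentKind(Enum):
    IMMUTABLE_ASSET = "immutable_asset"  # versioned JS/CSS, hashed image filenames
    CONTENT_PAGE = "content_page"  # blog posts, product pages
    REAL_TIME = "real_time"  # stock prices, live scores, inventory levels
    PERSONALIZED = "personalized"  # logged-in dashboards, account pages


# Example lifetimes only; tune them to how often your content really changes.
CACHE_POLICY = {
    ContentKind.IMMUTABLE_ASSET: "public, max-age=31536000",  # new content means a new URL
    ContentKind.CONTENT_PAGE: "public, max-age=3600",  # server/CDN copies purged on save
    ContentKind.REAL_TIME: "no-store",  # freshness beats speed
    ContentKind.PERSONALIZED: "private, no-cache",  # browser only, always revalidated
}


def cache_control_for(kind: ContentKind) -> str:
    return CACHE_POLICY[kind]


print(cache_control_for(ContentKind.PERSONALIZED))  # private, no-cache
```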

When Caching Causes Problems

Caching is powerful but creates a specific failure mode that every developer and site manager needs to understand: you make a change, you look at the site, and you see the old version. The change "didn't work." In reality, it did work — you're just seeing a cached copy of the old version.

This manifests in different contexts:

Browser cache: You updated a CSS file but your browser is still loading the old one. Solution: hard refresh (Ctrl+Shift+R or Cmd+Shift+R), which bypasses the browser cache and forces a full re-download. Or open the site in incognito/private mode.

Server/plugin cache: You updated page content on a WordPress site but visitors still see the old version. Solution: clear the page cache through your caching plugin's interface. Most plugins add a clear-cache or purge button to the WordPress admin bar.

CDN cache: You updated your site but the CDN is still serving the old version to users in distant locations. Solution: purge the CDN cache through the CDN provider's dashboard or API. Cloudflare has a "Purge Cache" option in the dashboard, or you can purge specific URLs.

DNS cache: You changed a DNS record but your browser (or your ISP's resolver) is still returning the old IP address. Solution: flush your local DNS cache (varies by OS) or wait for the TTL to expire.

Understanding which layer is serving stale data is the key to fixing caching issues quickly. The diagnostic approach: check the source (is the change actually deployed?), then systematically clear each cache layer until the updated version appears.
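
Response headers often hint at which layer answered. The sketch below is a hypothetical helper that summarizes a few common ones: `Age` (a standard header whose presence means the response came out of a cache rather than straight from the origin), Cloudflare's `CF-Cache-Status`, and `X-Cache`, a non-standard header some CDNs use. You could feed it the headers shown in your browser's developer tools or returned by any HTTP client.

```python
from typing import List, Mapping


def describe_cache_headers(headers: Mapping[str, str]) -> List[str]:
    """Summarize cache-related response headers to hint at which layer answered."""
    h = {name.lower(): value for name, value in headers.items()}  # names are case-insensitive
    notes: List[str] = []
    if "cf-cache-status" in h:
        notes.append(f"Cloudflare edge cache: {h['cf-cache-status']}")  # e.g. HIT, MISS, EXPIRED
    if "age" in h:
        notes.append(f"stored copy from a cache, {h['age']}s old")
    if "x-cache" in h:
        notes.append(f"X-Cache (CDN-specific): {h['x-cache']}")
    if "cache-control" in h:
        notes.append(f"Cache-Control: {h['cache-control']}")
    if not notes:
        notes.append("no cache-related headers found")
    return notes


for line in describe_cache_headers({"CF-Cache-Status": "HIT", "Age": "120"}):
    print(line)
# Cloudflare edge cache: HIT
# stored copy from a cache, 120s old
```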

Implementing Caching: Practical Starting Points

For WordPress: install WP Rocket (paid, most comprehensive) or LiteSpeed Cache (free, and excellent on LiteSpeed-based hosting). Enable page caching, browser caching, and Gzip compression, then connect your CDN. These steps alone typically produce 50–80% performance improvements on previously uncached WordPress sites.

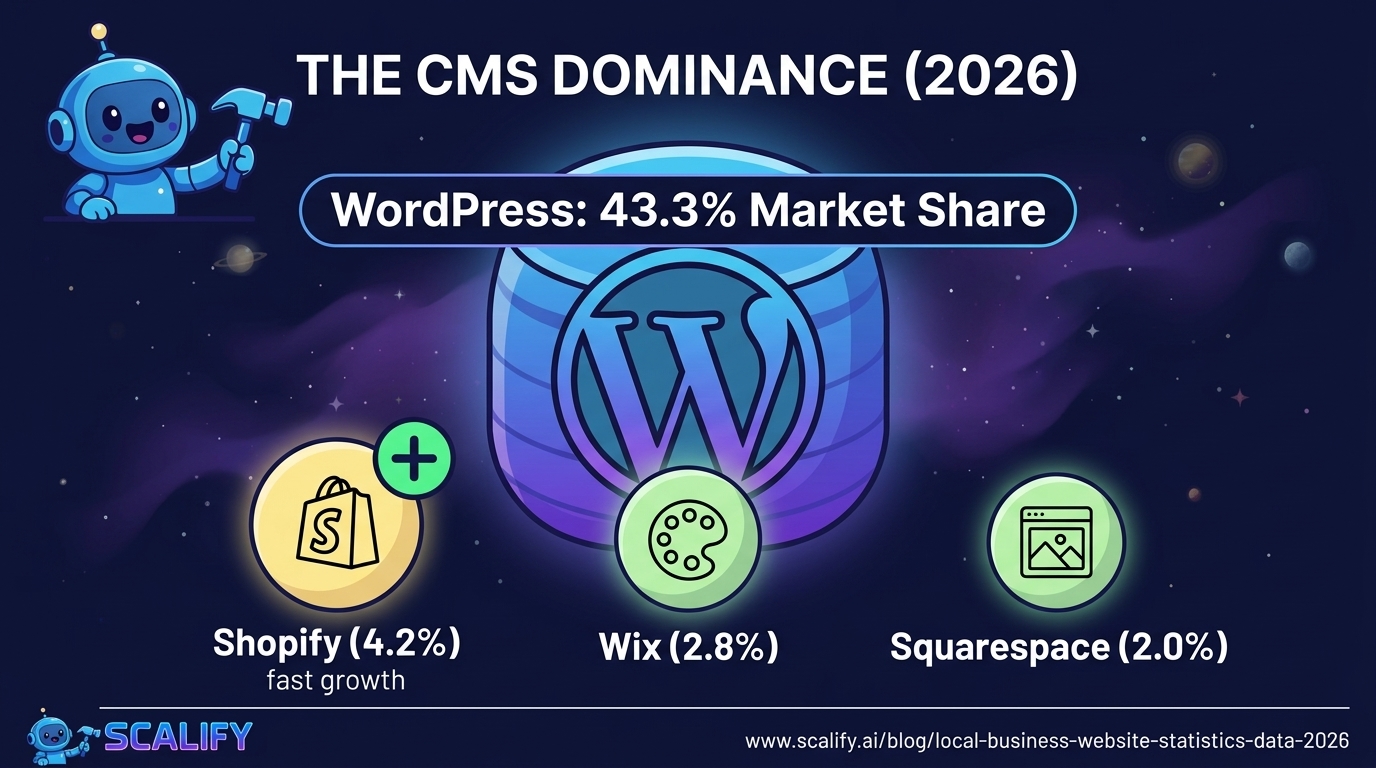

For Webflow, Shopify, Squarespace: caching is handled by the platform. Webflow serves sites through the Fastly CDN with appropriate cache headers, and Shopify uses a similar CDN approach. You generally don't need to configure caching for platform-hosted sites; it's already there.

For custom sites: implement Cache-Control headers on your web server configuration. Use Redis for server-side object caching if your stack supports it. Add a CDN layer (Cloudflare is free at the basic tier). Implement versioned static asset filenames to enable long-lived browser caching without staleness concerns.

The Bottom Line

Caching is the practice of storing pre-computed results so they can be served faster than recomputing them from scratch. It operates at every layer of your web stack: in browsers storing assets locally, in servers storing pre-rendered pages, in CDNs distributing assets globally, and in memory stores holding database query results. Getting caching right is one of the highest-impact performance improvements available; the difference between a site that loads in 3 seconds and one that loads in 0.5 seconds often comes down largely to caching.

Understand which layer is relevant for your specific situation, implement appropriate cache lifetimes for different content types, and build a plan for cache invalidation when content changes. These three habits keep your site fast without serving stale content.

At Scalify, performance optimization — including caching configuration — is built into every website we deliver. Fast from launch, not fast after a separate optimization engagement.
